About Kudan

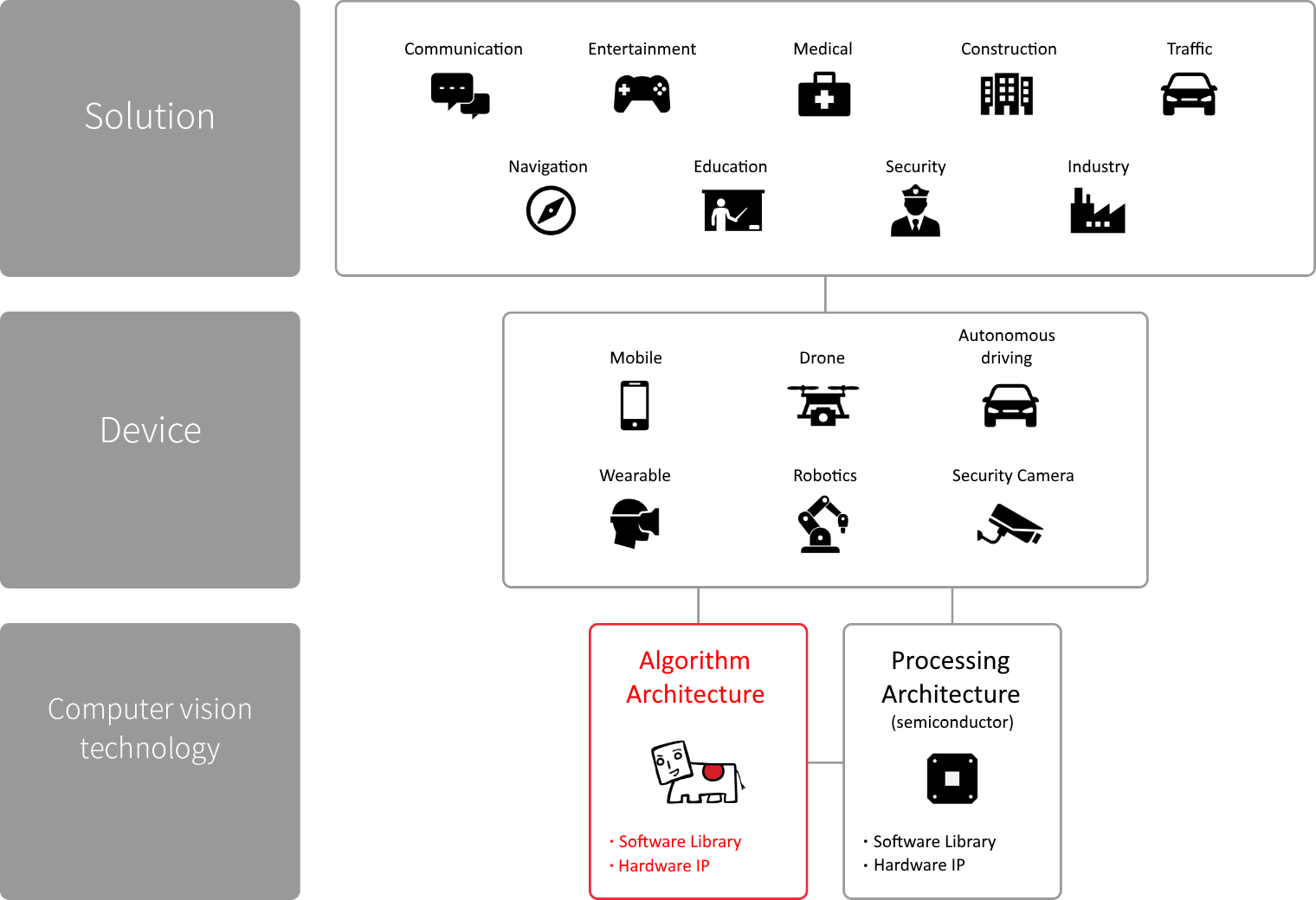

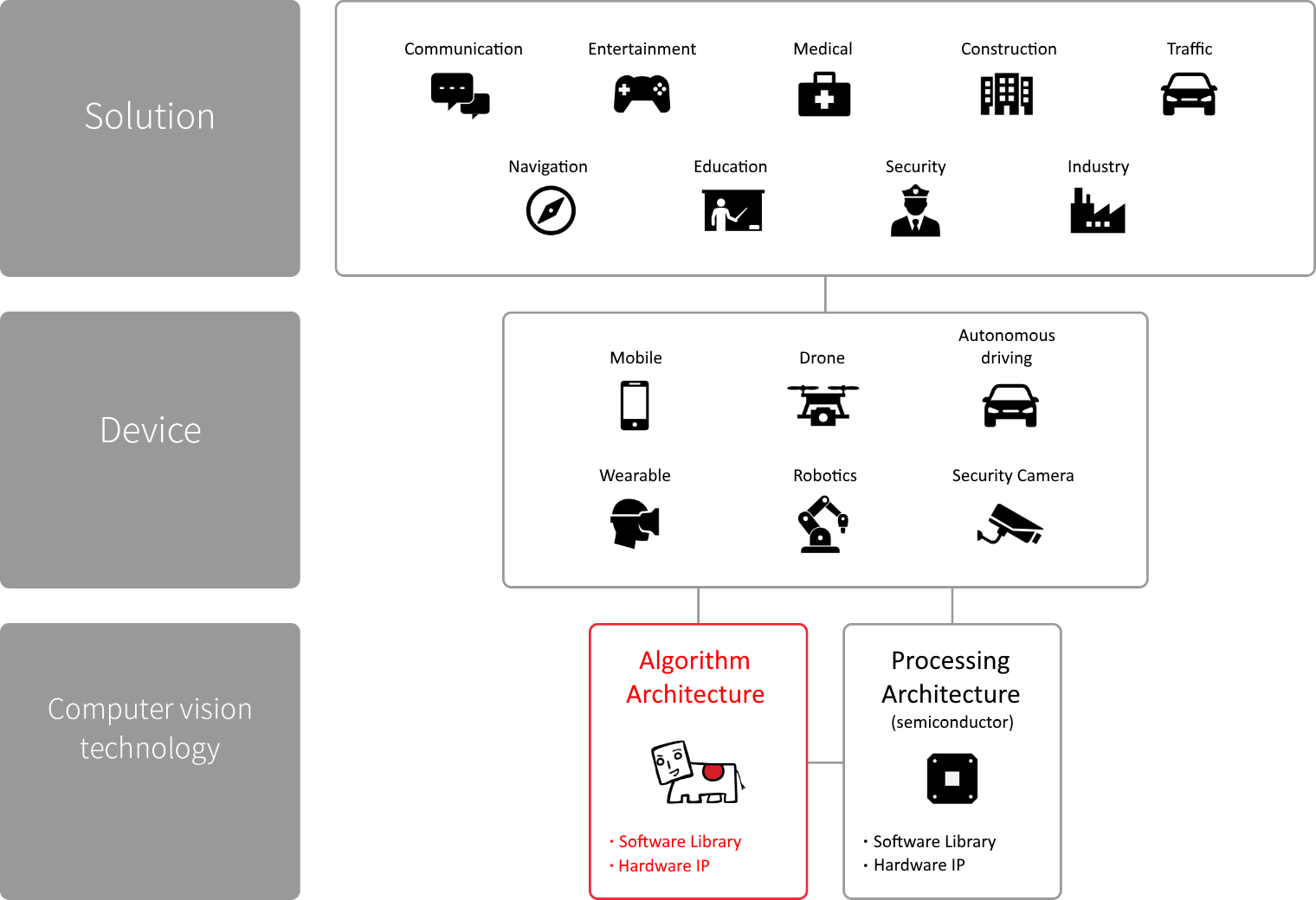

A computer’s “eye”, the camera, has outperformed the human eye for many years. However, a computer’s “vision”, its computer vision algorithms, is still primitive and far from human level because of fundamental limitations in the algorithms themselves.

To accelerate the evolution of applied computer vision technologies, Kudan focuses on developing fundamental modular algorithms and on designing a versatile architecture that enables optimization and acceleration on a range of processing architectures, with the aim of becoming the fundamental IP in the industry. Through all camera-equipped devices, Kudan’s technology will give computers true “vision” and support all kinds of autonomous and interactive IT solutions.

What is KudanSLAM?

Versatility

Hardware and operating system agnostic:

The core codebase can target most processor architectures and does not rely on any operating system functionality. Multiple processor classes can be utilized, ranging from low-powered general-purpose processors to highly custom DSPs. A wide variety of hardware sensors is supported, ranging from monocular and stereo cameras up to visual-inertial depth cameras.

Not targeting a particular use case:

Our SLAM is designed to be as general-purpose as possible. It can be used equally well in a wide range of situations, from mobile positional tracking through to autonomous driving.

Fully configurable and easy to integrate:

Every aspect of the system is highly configurable and exposed via a simple-to-use API, allowing easy tuning for the target hardware and use case.

Modularity

High-level SLAM:

Different approaches to tracking and mapping are available, as well as modules such as loop detection and closure, relocalization, and bundle adjustment.
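For readers unfamiliar with bundle adjustment, the standard textbook formulation (not necessarily the exact cost KudanSLAM minimizes) jointly refines all camera poses and 3D map points by minimizing a robustified reprojection error over every observation:

$$
\min_{\{R_i,\,t_i\},\,\{X_j\}} \; \sum_{(i,j)\in\mathcal{O}} \rho\!\left( \left\| x_{ij} - \pi\!\left( K\,(R_i X_j + t_i) \right) \right\|^2 \right),
\qquad
\pi\!\left( [p_x,\, p_y,\, p_z]^\top \right) = \left[ \frac{p_x}{p_z},\; \frac{p_y}{p_z} \right]^\top
$$

Here $\mathcal{O}$ is the set of (camera $i$, point $j$) pairs in which point $j$ was observed, $x_{ij}$ is the observed 2D keypoint, $X_j$ is the 3D point, $(R_i, t_i)$ is the pose of camera $i$, $K$ is the camera intrinsic matrix, and $\rho$ is a robust loss (for example Huber) that limits the influence of outlier matches.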

Mid-level computer vision:

Efficient implementations of various point-matching mechanisms, stereo matchers and pose estimation. All are highly configurable and optimized, using improved algorithms that are not available in the public domain.
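To illustrate what a point-matching mechanism does, here is a minimal brute-force matcher for binary feature descriptors (ORB-style bit strings, packed into Python ints for simplicity) using Hamming distance and a nearest-neighbour ratio test. This is a generic textbook technique, not KudanSLAM’s proprietary matcher; the names `hamming` and `match_descriptors` and the default ratio of 0.8 are assumptions made for illustration.

```python
# Hypothetical helpers: a generic brute-force binary matcher, not Kudan's implementation.

def hamming(a: int, b: int) -> int:
    """Number of differing bits between two binary descriptors packed as ints."""
    return bin(a ^ b).count("1")


def match_descriptors(query, train, ratio=0.8):
    """Brute-force nearest-neighbour matching with a ratio test.

    Returns a list of (query_index, train_index, distance) tuples.
    """
    matches = []
    if len(train) < 2:
        return matches  # the ratio test needs a best and a second-best candidate
    for qi, q in enumerate(query):
        # Distance from this query descriptor to every train descriptor, best first.
        dists = sorted((hamming(q, t), ti) for ti, t in enumerate(train))
        (d1, best), (d2, _) = dists[0], dists[1]
        # Accept only if the best match is clearly better than the runner-up.
        if d1 < ratio * d2:
            matches.append((qi, best, d1))
    return matches


query = [0b10110000, 0b00001111]
train = [0b10110001, 0b11111111, 0b00001110, 0b01000000]
print(match_descriptors(query, train))  # → [(0, 0, 1), (1, 2, 1)]
```

The ratio test discards ambiguous matches whose best and second-best candidates are nearly equidistant, which is a common way to reject matches on repetitive texture before pose estimation.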

Low-level image processing and maths:

Highly optimized versions of common vision processing building blocks, such as various blurs, interpolations and image warps. These are typically SIMD-optimized and provide far better performance than their OpenCV counterparts. We also provide our own linear algebra library.
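As a generic illustration of two of these building blocks (plain, unoptimized Python, not Kudan’s SIMD code), bilinear interpolation and an inverse-mapped affine warp can be sketched as below. The function names `bilinear_sample` and `warp_affine` and the six-parameter `inv` tuple are hypothetical, and clamping coordinates to the image border is an assumed edge-handling policy.

```python
import math

# Hypothetical helpers: generic bilinear sampling and affine warping, not Kudan's API.

def bilinear_sample(img, x, y):
    """Sample a grayscale image (a list of rows) at sub-pixel location (x, y).

    Coordinates outside the image are clamped to the border (edge replication).
    """
    h, w = len(img), len(img[0])
    x = min(max(x, 0.0), w - 1.0)
    y = min(max(y, 0.0), h - 1.0)
    x0, y0 = math.floor(x), math.floor(y)
    x1, y1 = min(x0 + 1, w - 1), min(y0 + 1, h - 1)
    fx, fy = x - x0, y - y0
    # Interpolate horizontally along the two rows, then vertically between them.
    top = (1 - fx) * img[y0][x0] + fx * img[y0][x1]
    bottom = (1 - fx) * img[y1][x0] + fx * img[y1][x1]
    return (1 - fy) * top + fy * bottom


def warp_affine(img, inv, out_w, out_h):
    """Affine warp by inverse mapping.

    inv = (a, b, c, d, e, f) maps output pixel (u, v) to the source location
    (a*u + b*v + c, d*u + e*v + f), which is then sampled bilinearly.
    """
    a, b, c, d, e, f = inv
    return [
        [bilinear_sample(img, a * u + b * v + c, d * u + e * v + f) for u in range(out_w)]
        for v in range(out_h)
    ]


img = [[0, 10],
       [20, 30]]
print(bilinear_sample(img, 0.5, 0.5))  # → 15.0
```

Inverse mapping (looping over output pixels and asking where each one comes from) avoids the holes that forward mapping leaves; an optimized implementation would compute many such samples per instruction rather than one pixel at a time.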

Companion modules:

While our focus is on SLAM itself, it’s often useful to have additional modules to help with integration. We provide a GUI library designed for cross-platform computer vision debugging, as well as modules for working with the generated SLAM maps.

Contact us with any questions or inquiries regarding the Kudan AR SDK.